The story of today is written in the decisions of yesterday

The history of our remarkable world is written in the sometimes small and seemingly innocuous decisions made by individuals in pursuit of their goals. These decisions have forged alliances, taken lives, broken hearts, created works of art, started wars, and ultimately reshaped the trajectory of human history.

Explore these decisions and how they dramatically shifted the fortunes of the societies that followed in their wake.

Feature Article

Recommended Reading

US History Timeline: The Dates of America’s Journey

When compared to other powerful nations such as France, Spain, and the United Kingdom, the…

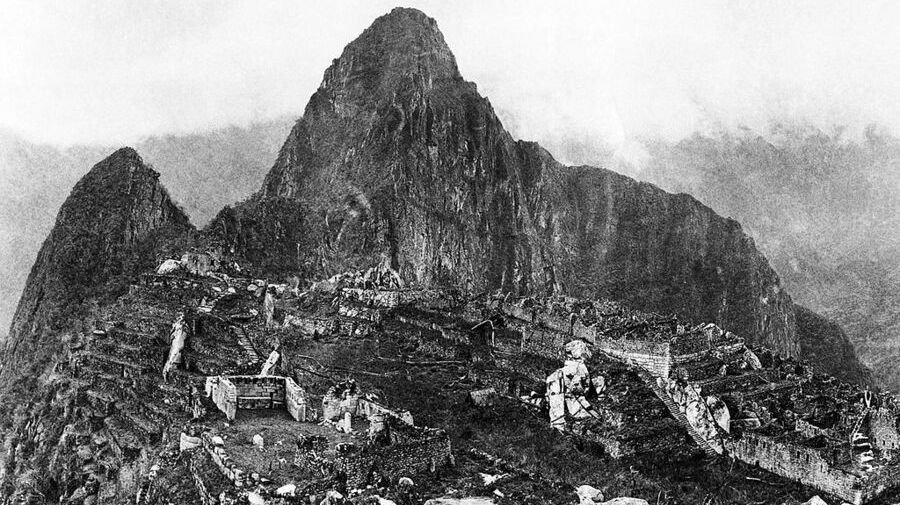

Ancient Civilizations Timeline: The Complete List from Aboriginals to Incans

Ancient civilizations continue to fascinate. Despite rising and falling hundreds if not thousands of years…

The Mason-Dixon Line: What Is It? Where is it? Why is it Important?

The British men in the business of colonizing the North American continent were so sure…

The Complete History of Social Media: A Timeline of the Invention of Online Networking

Social media has become an integral part of all of our lives. We use it…

iPhone History: Every Generation in Timeline Order 2007 – 2023

Every generation or two, a technological advancement is made that is so significant it radically…

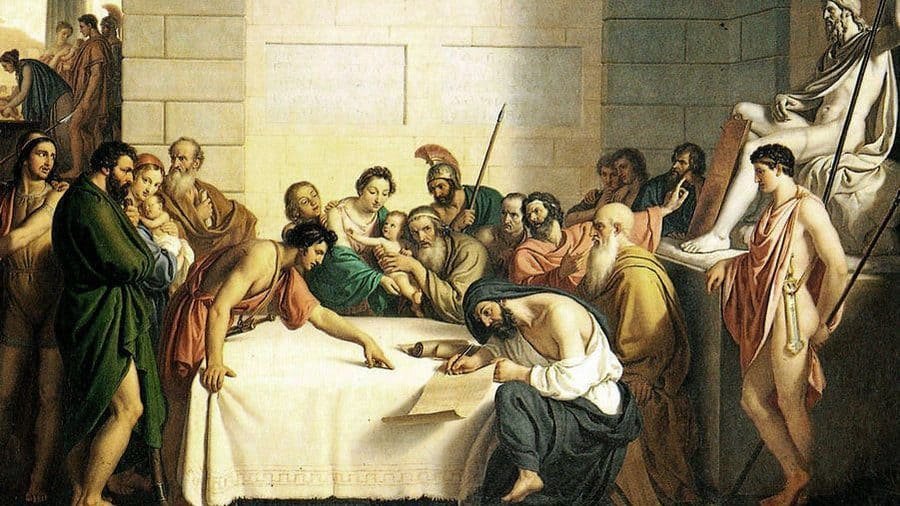

Ancient Sparta: The History of the Spartans

Ancient Sparta is one of the most well-known cities in Classical Greece. The Spartan society…

“A people without knowledge of their past history, origin, and culture, is like a tree without roots.”

Marcus Garvey

Latest Articles

The Greek God Family Tree: A Complete Family Tree of All Greek Deities

The Greek god family tree is extremely complex. The standard lines drawn between generations often…

Who Invented the CNC Machine? The History of Computer Numerical Control (CNC) Machinery

The invention of CNC machinery revolutionized the manufacturing industry, enabling the automated production of complex…

Who Invented Water? History of the Water Molecule

In the vast expanse of the cosmos, amidst swirling galaxies and celestial wonders, one seemingly…

Who Invented Meth? The Surprising History Revealed

Methamphetamine, commonly referred to as meth, has a history marked by intricacies and evolution. While…

Explore Topics

BIOGRAPHIES

US HISTORY

GODS AND GODDESSES

ANCIENT HISTORY

- The Cradle of Civilization: Mesopotamia and the First Civilizations

- Petronius Maximus

- Tiberius Gracchus

- Odysseus: Greek Hero of the Odyssey

HEALTH

Popular Articles

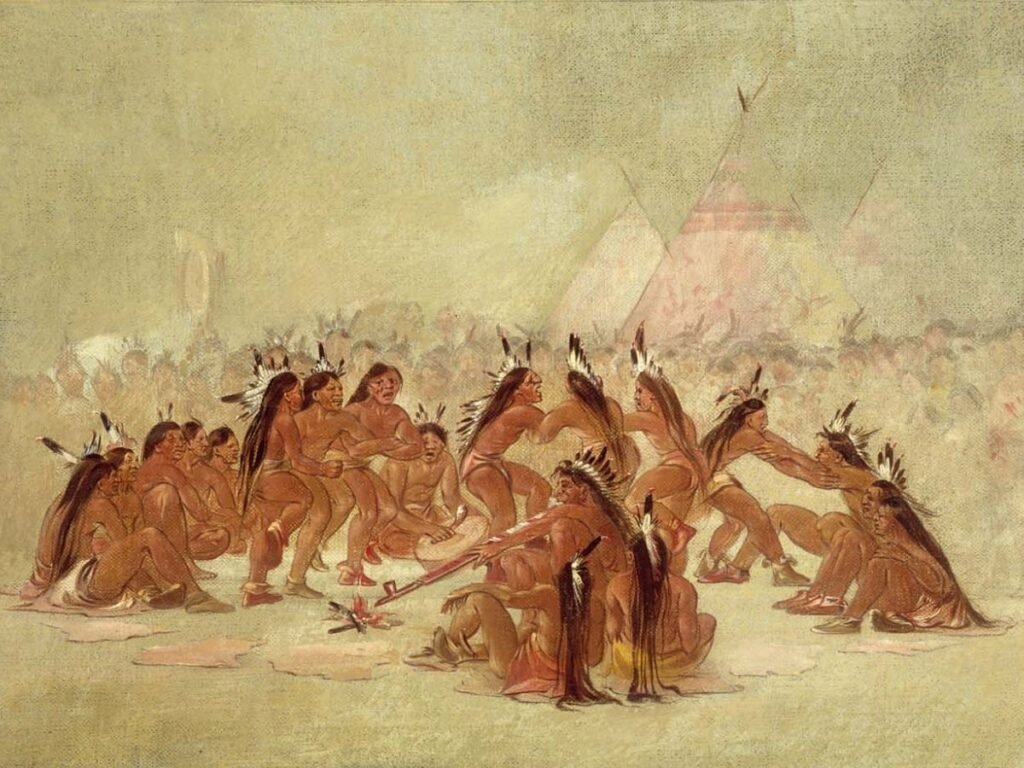

Native American Gods and Goddesses: Deities from Different Cultures

From Ussen and Apistotoki to Chethl and Tulukaruq, there are many Native American gods and…

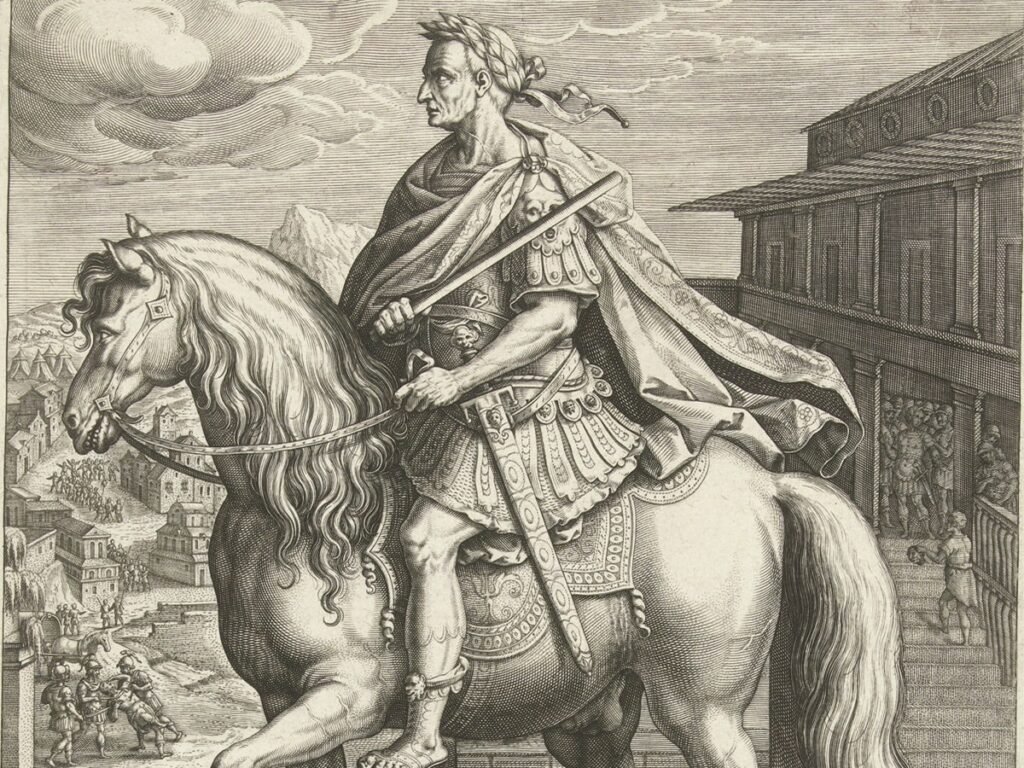

Galba: The Life and Death of One of the Most Unpopular Emperors of Rome

The history of Rome is replete with tales of glory and downfall, of emperors beloved…

Nazis & America: The USA’s Fascist Past

World War II has a nostalgia to it for Americans (and I’m allowed to say…

History of Water Treatment from Ancient Civilizations to Modern Times

The history of water treatment is a tale of human ingenuity and necessity, driven by…

Luna Goddess: The Majestic Roman Moon Goddess

Luna is the Roman goddess of the moon, often associated with nocturnal magic, secrets,…

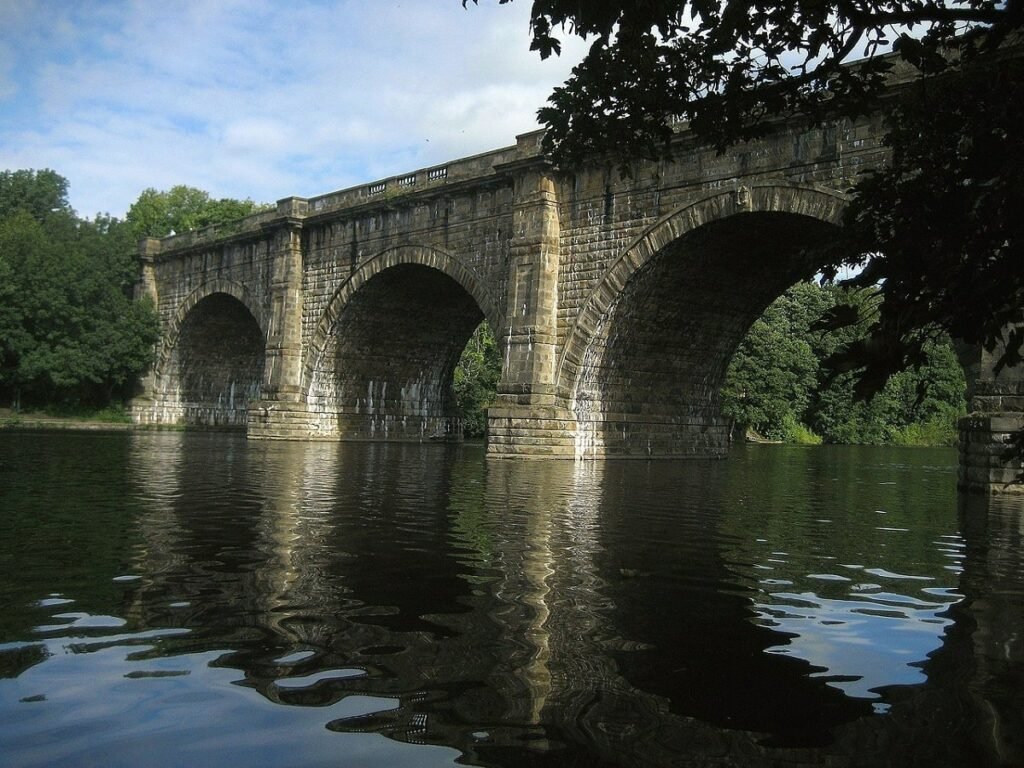

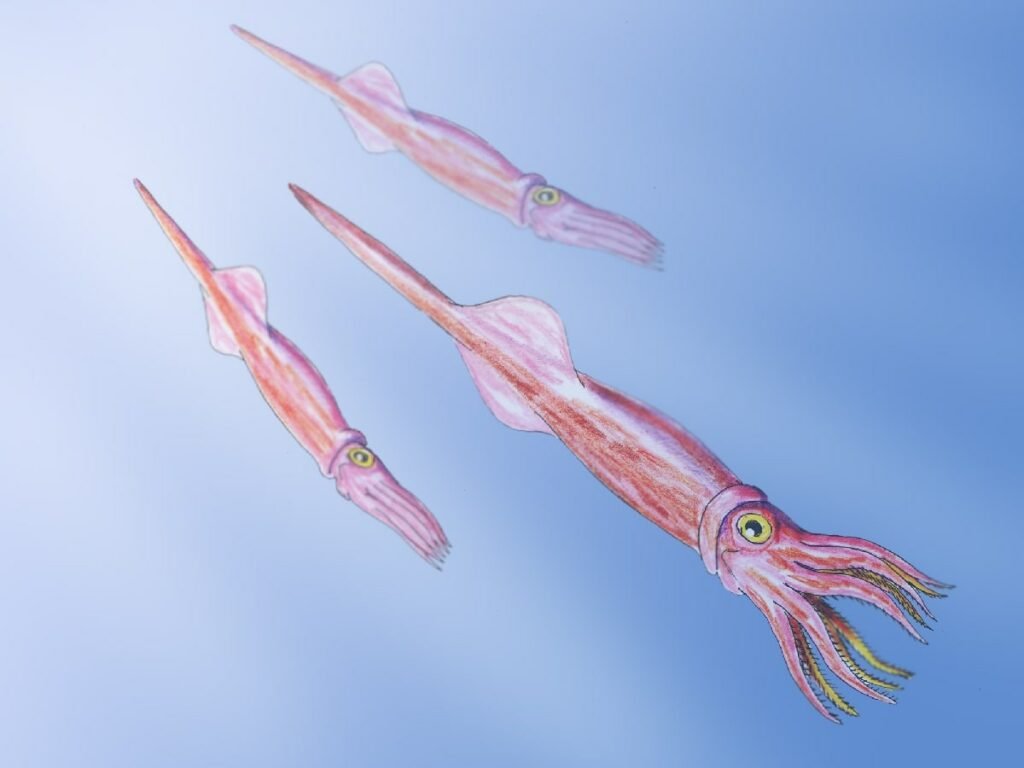

Belemnite Fossils and the Story They Tell of the Past

Belemnite fossils are the most prevalent fossils that remain from the Jurassic and Cretaceous periods;…