From the Moon Landing to M*A*S*H, from the Olympics to “The Office,” some of the most critical moments in history and culture have been experienced worldwide thanks to the wondrous invention of television.

The evolution of television has been one of slow, steady progress. However, there have been defining moments that changed the technology forever. The first TV, the first “broadcast” of live events to screen, the introduction of “the television show,” and streaming over the internet have all been significant leaps forward in how television works.

Today, television technology is an integral part of telecommunications and computing. Without it, we would be lost.

What Is a Television System?

It’s a simple question with a surprisingly complex answer. At its core, a “television” is a device that takes electrical input to produce moving images and sound for us to view. A “television system” would be both what we now call television and the camera/producing equipment that captured the original images.

The Etymology of “Television”

The word “television” first appeared in 1907 in the discussion of a theoretical device that transported images across telegraph or telephone wires. Ironically, this prediction was behind the times, as some of the first experiments into television used radio waves from the beginning.

“Tele-” is a prefix that means “far off” or “operating at a distance.” The word “television” was agreed upon quite rapidly, and while other terms like “iconoscope” and “emitron” referred to patented devices that were used in some electronic television systems, television is the one that stuck.

Today, the word “television” takes a slightly more fluid meaning. A “television show” is often considered a series of small entertainment pieces with a throughline or overarching plot. The difference between television and movies is found in the length and serialization of the media, rather than the technology used to broadcast it.

“Television” is now as often watched on phones, computers, and home projectors as it is on the independent devices we call “television sets.” In 2017, only 9 percent of American adults watched television using an antenna, and 61 percent watched it directly from the internet.

The Mechanical Television System

The first device you could call a “television system” under these definitions was created by John Logie Baird, a Scottish engineer. His mechanical television used a spinning “Nipkow disk,” a mechanical device that captured images and converted them to electrical signals. These signals, sent by radio waves, were picked up by a receiving device. Its own disks would spin similarly, illuminated by a neon light to produce a replica of the original images.

Baird’s first public demonstration of his mechanical television system was, somewhat prophetically, held at a London department store way back in 1925. Little did he know that television systems would be closely intertwined with consumerism throughout history.

The evolution of the mechanical television system progressed rapidly and, within three years, Baird’s invention was able to broadcast from London to New York. By 1928, the world’s first television station opened under the name W2XCW. It transmitted 24 vertical lines at 20 frames a second.

Of course, the first device that we today would recognize as television involved the use of Cathode Ray Tubes (CRTs). These convex glass-in-box devices shared images captured live on camera, and the resolution was, for its time, incredible.

This modern, electronic television had two fathers working simultaneously and often against each other. They were Philo Farnsworth and Vladimir Zworykin.

Who Invented the First TV?

Traditionally, a self-taught boy from Idaho named Philo Farnsworth is credited with inventing the first TV. But another man, Vladimir Zworykin, also deserves some of the credit. In fact, Farnsworth could not have completed his invention without the help of Zworykin.

How the First Electronic Television Camera Came to Be

Philo Farnsworth claimed to have designed the first electronic television receiver at only 14. Regardless of those personal claims, history records that Farnsworth, at only 21, designed and created a functioning “image dissector” in his small city apartment.

The image dissector “captured images” in a manner not too dissimilar to how our modern digital cameras work today. His tube, which captured 8,000 individual points, could convert the image to electrical waves with no mechanical device required. This miraculous invention led to Farnsworth creating the first all-electronic television system.

Zworykin’s Role in Developing the First Television

Having escaped to America during the Russian Civil War, Vladimir Zworykin found himself immediately employed by Westinghouse’s electrical engineering firm. He then set to work patenting work he had already produced in showing television images via a Cathode Ray Tube (CRT). He had not, at that point, been able to capture images as well as he could show them.

By 1929, Zworykin worked for the Radio Corporation of America (owned by General Electric and soon to form the National Broadcasting Company). He had already created a simple color television system. Zworykin was convinced that the best camera would also use CRT but never seemed to make it work.

When Was TV Invented?

Despite protestations from both men and multiple drawn-out legal battles over their patents, RCA eventually paid royalties to use Farnsworth’s technology to transmit to Zworykin’s receivers. In 1927, the first TV was invented. For decades after, these electronic televisions changed very little.

When Was The First Television Broadcast?

The first television broadcast was by Georges Rignoux and A. Fournier in Paris in 1909. However, this was the broadcast of a single line. The first broadcast that general audiences would have been wowed by was on March 25, 1925. That is the date John Logie Baird presented his mechanical television.

When television began to change its identity from the engineer’s invention to the new toy for the rich, broadcasts were few and far between. Among the first was the coronation of King George VI, which was also one of the first television broadcasts to be filmed outside.

In 1939, the National Broadcasting Company (NBC) broadcast the opening of New York’s World’s Fair. This event included a speech from Franklin D. Roosevelt and an appearance by Albert Einstein. By this point, NBC had a regular broadcast of two hours every afternoon and was watched by approximately nineteen thousand people around New York City.

The First Television Networks

The first television network was the National Broadcasting Company, a subsidiary of the Radio Corporation of America (or RCA). It started in 1926 as a series of radio stations in New York and Washington. NBC’s first official broadcast was on November 15, 1926.

NBC started to regularly broadcast television after the 1939 New York World’s Fair. It had approximately one thousand viewers. From this point on, the network would broadcast every day and continues to do so now.

The National Broadcasting Company kept a dominant position among television networks in the United States for decades but always had competition. The Columbia Broadcasting System (CBS), which had also previously broadcast in radio and mechanical television, turned to all-electronic television systems in 1939. In 1940, it became the first television network to broadcast in color, albeit in a one-off experiment.

The American Broadcasting Company (ABC) was forced to break off from NBC to form its own television network in 1943. This was due to the FCC being concerned that a monopoly was occurring in television.

The three television networks would rule television broadcasting for forty years without competition.

In England, the publicly-owned British Broadcasting Corporation (or BBC) was the only television station available. It started broadcasting television signals in 1929, with John Logie Baird’s experiments, but the official Television Service did not exist until 1936. The BBC would remain the only network in England until 1955.

The First Television Productions

The first made-for-television drama would arguably be a 1928 drama called “The Queen’s Messenger,” written by J. Hartley Manners. This live drama presentation included two cameras and was lauded more for the technological marvel than anything else.

The first news broadcasts on television involved news readers repeating what they just had broadcast on radio.

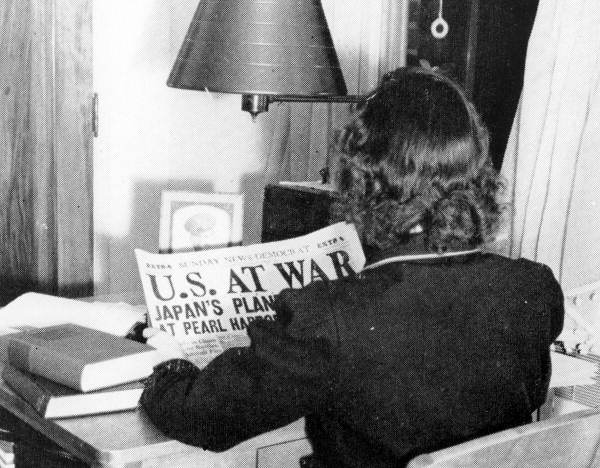

On December 7, 1941, Ray Forrest, one of the first full-time news announcers for television, presented the first news bulletin. Marking the first time that “regularly scheduled programs” were interrupted, his bulletin announced the attack on Pearl Harbor.

This special report for CBS ran for hours, with experts coming into the studio to discuss everything from geography to geopolitics. According to a report CBS gave to the FCC, this unscheduled broadcast “was unquestionably the most stimulating challenge and marked the greatest advance of any single problem faced up to that time.”

After the war, Forrest went on to host one of the first cooking shows on television, “In the Kelvinator Kitchen.”

When Was the First TV Sold?

The first television sets available to the public were manufactured in 1934 by Telefunken, a subsidiary of the electronics company Siemens. RCA began manufacturing American sets in 1939. They cost around $445 at the time (the American average salary was $35 per month).

TV Becomes Mainstream: The Post-War Boom

After the Second World War, a newly invigorated middle class caused a boom in sales of television sets, and television stations began to broadcast around the clock worldwide.

By the end of the 1940s, audiences were looking to get more from television programming. While news broadcasts would always be important, audiences looked for entertainment that was more than a play that happened to be caught on camera. Experiments from major networks led to significant changes in the type of television programs in existence. Many of these experiments can be seen in the shows of today.

What Was the First TV Show?

The first regularly broadcast TV show was a visual version of the popular radio series, “Texaco Star Theatre.” It began TV broadcasts on June 8, 1948. By this time, there were nearly two hundred thousand television sets in America.

The Rise of The Sitcom

In 1947, DuMont Television Network (partnered with Paramount Pictures) began to air a series of teledramas starring real-life couple Mary Kay and Johnny Stearns. “Mary Kay and Johnny” featured a middle-class American couple facing real-life problems. It was the first show on television to show a couple in bed, as well as a pregnant woman. It was not only the first “sitcom” but the model for all the great sitcoms since.

Three years later, CBS hired a young actor named Lucille Ball, who had previously been known in Hollywood as “The Queen of the B (movies).” The network initially tried her out in other sitcoms, but she eventually convinced it that its best show would include her partner, just as Mary Kay and Johnny had.

The show, entitled “I Love Lucy,” became a runaway success and is now considered a cornerstone of television.

Today, “I Love Lucy” has been described as “legitimately the most influential in TV history.” The popularity of reruns led to the concept of “syndication,” an arrangement in which other television stations could purchase the rights to screen reruns of the show.

According to CBS, “I Love Lucy” still makes the company $20 million a year. Lucille Ball is now considered one of the most important names in the history of the medium.

The “sitcom,” derived from the phrase “situation comedy,” is still one of the most popular forms of television programming.

In 1983, the final episode of the popular sitcom “M*A*S*H” had over one hundred million viewers glued to their screens, a number not beaten for nearly thirty years.

In 1997, Jerry Seinfeld became the first sitcom star to earn a million dollars per episode. “It’s Always Sunny in Philadelphia,” a sitcom about the immoral and crazy owners of a bar, is the longest-running live-action sitcom ever, now into its 15th season.

When Did Color TV Come Out?

The ability of television systems to broadcast and receive color occurred relatively early in the evolution of electronic television. Patents for color television existed from the late nineteenth century, and John Baird regularly broadcast from a color television system in the thirties.

The National Television System Committee (NTSC) met in 1941 to develop a standardized system for television broadcasts, so that every television set could receive the signal from any station. The committee, created by the Federal Communications Commission (FCC), would meet again twelve years later to agree upon a standard for color television.

However, a problem faced by television networks was that color broadcasting required extra radio bandwidth. This bandwidth, the FCC decided, needed to be separate from that which carried black-and-white television so that all audiences could still receive a broadcast. This NTSC standard was first used for the “Tournament of Roses Parade” in 1954. Few viewers could watch in color, however, as a special receiver was required.

The First TV Remote Control

While the first remote controls were intended for military use, controlling boats and artillery from a distance, entertainment providers soon considered how radio and television systems might use the technology.

What Was The First TV Remote?

The first remote control for television was developed by Zenith in 1950 and was called “Lazy Bones.” It had a wired system and only a single button, which allowed for the changing of channels.

By 1955, however, Zenith had produced a wireless remote that worked by shining light at a receiver on the television. This remote could change channels, turn the TV on and off, and even change the sound. However, because it was activated by light, ordinary lamps and sunlight could unintentionally trigger the television.

While later remote controls would use ultrasonic frequencies, infrared light ended up being the standard. The information sent from these devices was often unique to the television system but could offer complex instructions.

Today, all television sets are sold with remote controls as standard, and an inexpensive “universal remote” can be purchased easily online.

The Tonight Show and Late Night Television

After starring in the first American sitcom, Johnny Stearns continued on television by being one of the producers behind “Tonight, Starring Steve Allen,” now known as “The Tonight Show.” This late-night broadcast is the longest-running television talk show still running today.

Prior to “The Tonight Show,” talk shows were already growing popular. “The Ed Sullivan Show” opened in 1948 with a premiere that included Dean Martin, Jerry Lewis, and a sneak preview of Rodgers and Hammerstein’s “South Pacific.” The show featured serious interviews with its stars, and Sullivan was known to have little respect for the young musicians that performed on his show. “The Ed Sullivan Show” lasted until 1971 and is now most remembered for being the show that introduced the United States to “Beatlemania.”

“The Tonight Show” was a more low-brow affair compared to Sullivan’s show, and it popularized a number of elements found today in late-night television: the opening monologue, live bands, sketch moments with guest stars, and audience participation all found their start in this program.

While popular under Allen, “The Tonight Show” really became a part of history during its epic three-decade run under Johnny Carson. From 1962 to 1992, Carson’s program was less about the intellectual conversation with guests than it was about promotion and spectacle. Carson, to some, “define[d] in a single word what made television different from theater or cinema.”

“The Tonight Show” still runs today, hosted by Jimmy Fallon, while contemporary competitors include “The Late Show” with Stephen Colbert and “The Daily Show” with Trevor Noah.

Digital Television Systems

Starting with the first TV, television broadcasts were always analog, which means the radio wave itself contains the information the set needs to create a picture and sound. Image and sound would be directly translated into waves via “modulation” and then converted back by the receiver through “demodulation.”

A digital radio wave doesn’t contain such complex information, but alternates between two forms, which can be interpreted as zeros and ones. However, this information needs to be “encoded” and “decoded.”

With the rise of low-cost, high-power computing, engineers experimented with digital broadcasting. Digital broadcast “decoding” could be done by a computer chip within the TV set, which breaks down the waves into discrete zeros and ones.
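
As a rough illustration of the encoding and decoding idea, here is a minimal Python sketch. It is not how any real broadcast standard works: the two signal levels (`HIGH` and `LOW`), the simple noise model, and every function name are assumptions made up for this example. It only shows why a signal that alternates between two forms can survive interference, since the receiver just has to decide which of the two forms it saw.

```python
import random

# Two assumed signal levels standing in for the "two forms" of a digital wave.
HIGH, LOW = 1.0, -1.0


def bytes_to_bits(data: bytes) -> list[int]:
    """Split each byte into eight bits, most significant bit first."""
    return [(byte >> shift) & 1 for byte in data for shift in range(7, -1, -1)]


def bits_to_bytes(bits: list[int]) -> bytes:
    """Pack groups of eight bits back into bytes."""
    out = bytearray()
    for i in range(0, len(bits), 8):
        value = 0
        for bit in bits[i:i + 8]:
            value = (value << 1) | bit
        out.append(value)
    return bytes(out)


def encode(data: bytes) -> list[float]:
    """Encode: turn every bit into one of the two signal levels."""
    return [HIGH if bit else LOW for bit in bytes_to_bits(data)]


def add_noise(signal: list[float], amount: float, seed: int = 0) -> list[float]:
    """Simulate interference by nudging every sample by up to +/- amount."""
    rng = random.Random(seed)
    return [sample + rng.uniform(-amount, amount) for sample in signal]


def decode(signal: list[float]) -> bytes:
    """Decode: anything above zero counts as a one, anything else as a zero."""
    return bits_to_bytes([1 if sample > 0 else 0 for sample in signal])


received = add_noise(encode(b"TV"), amount=0.5)
print(decode(received))
```

Because the noise in this sketch never pushes a sample across the zero threshold, the decoded bytes match what was sent, even though no received sample has exactly its original value. An analog signal has no such threshold to fall back on, so the same noise would show up directly in the picture.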

While this could be used to produce greater image quality and clearer audio, it would also require much higher bandwidth and computing power that only became available in the seventies. The bandwidth requirement was reduced over time with the advent of “compression” algorithms, and television networks could broadcast greater amounts of data to televisions at home.
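
To give a feel for what “compression” means, the following Python sketch uses run-length encoding, one of the simplest compression schemes. This is only a stand-in chosen for illustration: broadcast systems rely on far more elaborate video codecs, and the function names and the sample row of pixel values here are hypothetical.

```python
def rle_encode(pixels: list[int]) -> list[tuple[int, int]]:
    """Collapse repeated neighbouring values into (value, count) pairs."""
    runs: list[tuple[int, int]] = []
    for pixel in pixels:
        if runs and runs[-1][0] == pixel:
            # Same value as the previous pixel: extend the current run.
            runs[-1] = (pixel, runs[-1][1] + 1)
        else:
            # A new value starts a new run of length one.
            runs.append((pixel, 1))
    return runs


def rle_decode(runs: list[tuple[int, int]]) -> list[int]:
    """Expand (value, count) pairs back into the original pixel values."""
    pixels: list[int] = []
    for value, count in runs:
        pixels.extend([value] * count)
    return pixels


# A made-up row of 24 pixels: black, a short white stripe, then black again.
scanline = [0] * 12 + [255] * 4 + [0] * 8
runs = rle_encode(scanline)

print(runs)                          # three (value, count) pairs
print(rle_decode(runs) == scanline)  # the row is recovered exactly
```

In this example, 24 pixel values shrink to three pairs with nothing lost. Images with large uniform areas compress well this way, while busy images do not, which is one reason practical systems use more sophisticated techniques.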

Digital broadcast of television via cable television began in the mid-nineties, and as of July 2021, no television station in the United States broadcasts in analog.

VHS Brings the Movies to TV

For a very long time, what you saw on television was determined by what the television networks chose to broadcast. While some wealthy people could afford film projectors, the large box in the living room could only show what someone else wanted it to.

Then, in the 1960s, electronics companies began to provide devices that could “record television” onto magnetic tape, which could then be watched through the set at a later time. These “Video Cassette Recorders” were expensive but desired by many. The first Sony VCR cost the same as a new car.

In the late seventies, two companies faced off to determine the standard of home video cassettes in what some referred to as a “format war.”

Sony’s “Betamax” eventually lost to JVC’s “VHS” format due to the latter company’s willingness to make their standard “open” (and not require licensing fees).

VHS machines quickly dropped in price, and soon most homes contained an extra piece of equipment. Contemporary VCRs could record from the television and play portable tapes with other recordings. In California, businessman George Atkinson purchased a library of fifty movies directly from movie companies and then proceeded to start a new industry.

The Birth of Video Rental Companies

For a fee, customers could become members of his “Video Station.” Then, for an additional cost, they could borrow one of the fifty movies to watch at home before returning it. So began the era of the video rental company.

Movie studios were concerned by the concept of home video. They argued that giving people the ability to copy to tape what they were shown constituted theft. These cases reached the Supreme Court, which eventually decided that recording for home consumption was legal.

Studios replied by creating licensing agreements to make video rental a legitimate industry and produce films specifically for home entertainment.

While the first “direct to video” movies were low-budget slashers or pornography, the format became quite popular after the success of Disney’s “The Return of Jafar.” This sequel to the popular animated movie “Aladdin” sold 1.5 million copies in its first two days of release.

Home video changed slightly with the advent of digital compression and the rise of optical disc storage.

Soon, networks and film companies could offer high-quality digital television recordings on Digital Versatile Discs (or DVDs). These discs were introduced in the mid-nineties but soon were superseded by high-definition discs.

As possible evidence of karma, it was Sony’s “Blu-ray” system that won against Toshiba’s “HD DVD” in home video’s second “Format War.” Today, Blu-rays are the most popular form of physical purchase for home entertainment.

First Satellite TV

On July 12, 1962, the Telstar 1 satellite beamed images sent from Andover Earth Station in Maine to the Pleumeur-Bodou Telecom Center in Brittany, France. This marked the birth of satellite television. Only three years later, the first commercial satellite for the purposes of broadcasting was sent into space.

Satellite television systems allowed television networks to broadcast around the world, no matter how far from the rest of society a receiver might be. While owning a personal receiver was, and still is, far more expensive than conventional television, networks took advantage of such systems to offer subscription services that were not available to public consumers. These services were a natural evolution of already existing “cable channels” such as “Home Box Office,” which relied on direct payment from consumers instead of external advertising.

The first live satellite broadcast that was watchable worldwide occurred in June 1967. BBC’s “Our World” employed multiple geostationary satellites to beam a special entertainment event that included the first public performance of “All You Need is Love” by The Beatles.

The Constant Rise and Fall of 3D Television

3D television is a technology with a long history of attempts and failures, and it will likely return one day. “3D Television” refers to television that conveys depth perception, often with the aid of specialized screens or glasses.

It may come as no surprise that the first example of 3D television came from the labs of John Baird. His 1928 presentation bore all the hallmarks of future research into 3D television because the principle has always been the same. Two images are shown from slightly different angles to approximate the different images our two eyes see.

While 3D films have come and gone as gimmicky spectacles, the early 2010s saw a significant spark of excitement for 3D television, which promised all the spectacle of the movies at home. While there was nothing technologically advanced about screening 3D television, broadcasting it required more complexity in standards. At the end of 2010, the DVB-3D standard was introduced, and electronics companies around the world were clamoring to get their products into homes.

However, like the 3D crazes in movies every few decades, the home viewer soon grew tired. While 2010 saw the PGA Championship, FIFA World Cup, and Grammy Awards all filmed and broadcast in 3D, channels began to stop offering the service only three years later. By 2017, Sony and LG officially announced they would no longer support 3D for their products.

Some future “visionary” will likely take another shot at 3D television but, by then, there is a very good chance that television will be something very different indeed.

LCD/LED Systems

During the late twentieth century, new technologies arose in how television could be presented on the screen. Cathode Ray Tubes had limitations in size, longevity, and cost. The invention of low-cost microchips and the ability to manufacture quite small components led TV manufacturers to look for new technologies.

Liquid Crystal Display (LCD) is a way to present images by having a backlight shine through millions (or even billions) of crystals that can be individually made opaque or translucent using electricity. This method allows the display of images using devices that can be very flat and use little electricity.

LCDs were popular in the 20th century for use in clocks and watches, and improvements in the technology let them become the next way to present images for television. Replacing the old CRT meant televisions were lighter, thinner, and less expensive to run. Because they did not use phosphors, images left on the screen could not “burn in.”

Light Emitting Diodes (LEDs) use extremely small “diodes” that light up when electricity passes through them. Like LCD, they are inexpensive, small, and use little electricity. Unlike LCD, they need no backlight. Because LCDs are cheaper to produce, they have been the popular choice in the early 21st century. However, as technology changes, the advantages of LED may eventually lead to it taking over the market.

The Internet Boogeyman

The ability of households to have personal internet access in the nineties led to fear among those in the television industry that television might not be around forever. While many saw this fear as similar to the one raised by the rise of VHS, others took advantage of the changes.

With internet speeds increasing, the data that was previously sent to the television via radio waves or cables could now be sent through your telephone line. The information you would once need to record onto a video cassette could be “downloaded” to watch in the future. People began acting “outside of the law,” very much like the early video rental stores.

Then, when internet speed reached a point fast enough, something unusual happened.

“Streaming Video” and the Rise of YouTube

In 2005, three former employees of the online financial company PayPal created a website that allowed people to upload their home videos to watch online. You didn’t need to download these videos but could watch them “live” as the data was “streamed” to your computer. This means you did not need to wait for a download or use up hard-drive space.

Videos were free to watch but contained advertising and allowed content creators to include ads for which they would be paid a small commission. This “partner program” encouraged a new wave of creators who could make their own content and gain an audience without relying on television networks.

The creators offered a limited release to interested people, and by the time the site officially opened, more than two million videos a day were being added.

Today, creating content on YouTube is big business. With the ability for users to “subscribe” to their favorite creators, the top YouTube stars can earn tens of millions of dollars a year.

Netflix, Amazon, and the New Television Networks

In the late nineties, a new subscription video rental service formed that was seemingly like all those that came after George Atkinson. It had no physical buildings but would rely on people returning the video in the mail before renting the next one. Because videos now came on DVD, postage was cheap, and the company soon rivaled the most prominent video rental chains.

Then in 2007, as people were paying attention to the rise of YouTube, the company took a risk. Using the rental licenses it already had to lend out its movies, it placed them online for consumers to stream directly. It started with 1,000 titles and only allowed 18 hours of streaming per month. This new service was so popular that, by the end of the year, the company had 7.5 million subscribers.

The problem for Netflix was that it relied on the same television networks that it was damaging. If people watched its streaming service more than traditional television, networks would need to increase their fee for licensing their shows to rental companies. In fact, if a network decided to no longer license its content to Netflix, there would be little the company could do.

So, the company started to produce its own material. It hoped to attract even more viewers by investing a large amount of money on new shows like “Daredevil” and the US remake of “House of Cards.” The latter series, which ran from 2013 to 2018, won 34 Emmys, cementing Netflix as a competitor in the television network industry.

In 2021, the company spent $17 billion on original content and continued to decrease the amount of content purchased from the three major networks.

Other companies took note of the success of Netflix. Amazon, which started life as an online bookstore and became one of the largest e-commerce platforms globally, began to produce its own original content in the same year as Netflix and has since been joined by dozens of other services around the world.

The Future of Television

In some ways, those who feared the internet were right. Today, streaming takes up over a quarter of the audience’s viewing habits, with this number rising every year.

However, this change is less about the media and more about the technology that accesses it. Mechanical televisions are gone. Analog broadcasts are gone. Eventually, radio-broadcast television will disappear as well. But television? Those half-hour and one-hour blocks of entertainment, they are not going anywhere.

The most-watched streaming programs of 2021 include dramas, comedies, and, just like at the beginning of television history, cooking shows.

While slow to react to the internet, the major networks all now have their own streaming services, and new advances in fields like virtual reality mean that television will continue to evolve well into our future.